Spokes v1.3.1 and Client v0.11.1

Published at September 12, 2021 · 8 min read

Today we’ve published software updates to Spokes and the Packetriot client program that enable convenient access to distributed services hosted behind other tunnels connected to a Spokes server. Access to external services can be mapped as well.

These new features establish an application-level mesh network similar to what Envoy or Linkerd provide. This enables developers to write programs that can access ports on 127.0.0.1 and connect to the actual service running on a different network or on completely different compute infrastructure.

Spokes

Revision v1.3.1 of Spokes introduces support for allowed external connections via the SOCKS server, application mesh communication, new API endpoints for tunnel search and pagination, and a form on our dashboard for performing search.

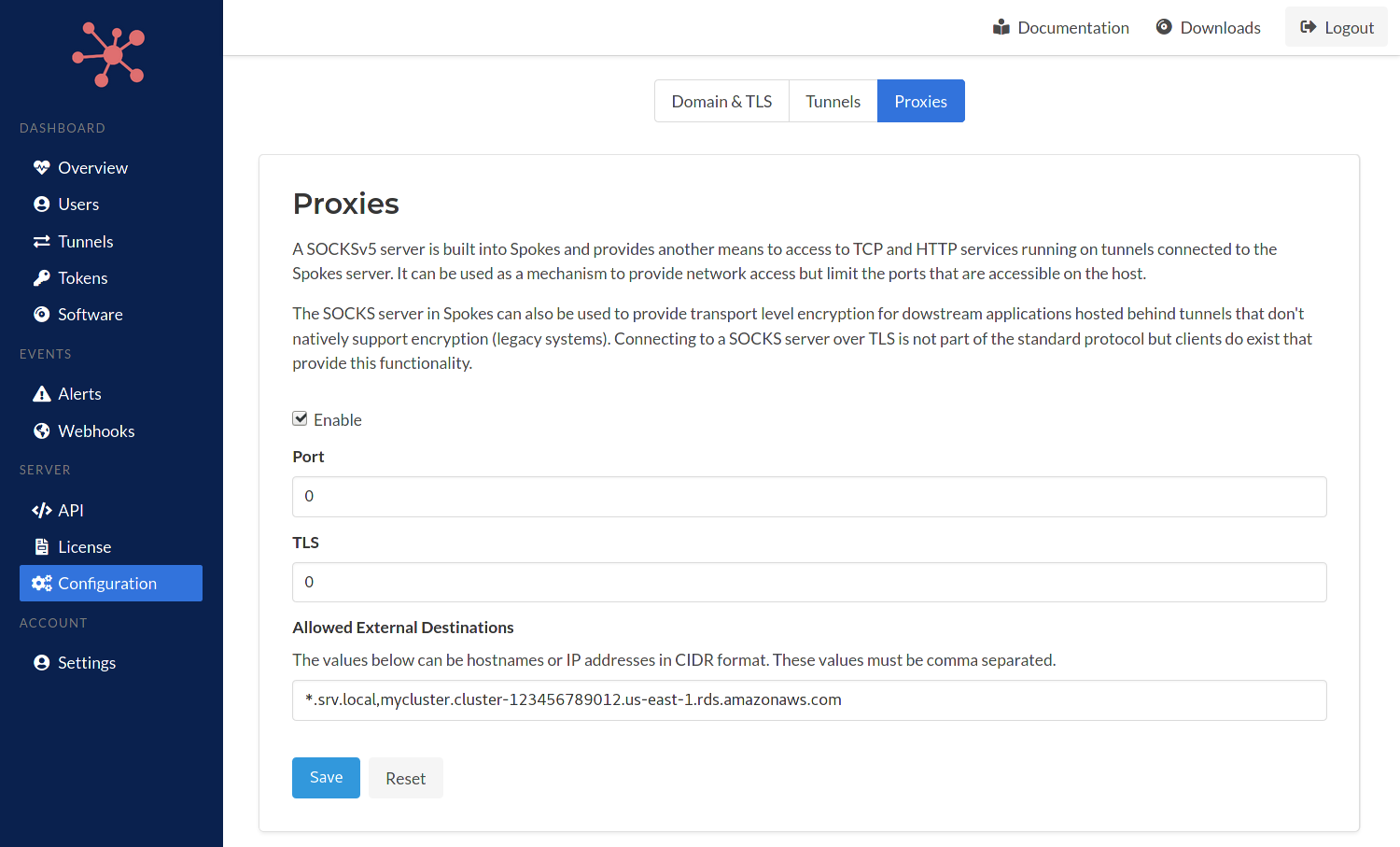

Allow-list for External SOCKS Destinations

We have added a new field and input to the SOCKS server configuration to add IP networks (CIDR) and hostnames as valid external destinations for SOCKS connect requests. This enables programs on the other side of clients to access resources that may be hosted alongside a Spokes server such as containers or hosts that are on private networks.

Valid destinations are identified using CIDR notation for IP networks, e.g. 172.16.0.0/24. Hostnames such as internal-app-a.srv.local can be used as well, along with wildcard hostnames such as *.srv.local.
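To make the matching rules concrete, here is a minimal Python sketch of how a destination could be checked against a list of CIDR networks, hostnames and wildcard hostnames. It is not the Spokes implementation. The function name `is_allowed` is hypothetical, and shell-style wildcard matching is an assumption; in this sketch `*` also matches across dots.

```python
import fnmatch
import ipaddress


def is_allowed(destination: str, allow_list: list[str]) -> bool:
    """Return True if destination (IP literal or hostname) matches any entry."""
    try:
        addr = ipaddress.ip_address(destination)
    except ValueError:
        addr = None  # not an IP literal, so treat it as a hostname

    for entry in allow_list:
        if addr is not None:
            try:
                network = ipaddress.ip_network(entry, strict=False)
            except ValueError:
                continue  # entry is a hostname pattern and cannot match an IP
            if addr in network:
                return True
        elif fnmatch.fnmatchcase(destination.lower(), entry.lower()):
            # Assumption: shell-style wildcards, compared case-insensitively.
            return True
    return False
```

With the entries from this post, `is_allowed("172.16.0.15", ["172.16.0.0/24"])` is true, while a hostname outside `*.srv.local` is rejected.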

Server Configuration Proxies

Connections to the SOCKS server can be established using the plain-text (standard) or TLS port to communicate a SOCKS CONNECT request. The Packetriot client can also open a SOCKS listener on a local port that forwards requests to Spokes, or map a local port to a specific service.

The destination in the CONNECT request is evaluated against the allow-list first. If it is not on the allow-list, Spokes checks whether the request is for a service hosted behind a tunnel that is online. We plan to add more access control features to manage which tunnels can map to other distributed services and allow traffic.
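The evaluation order can be sketched in a few lines of Python. This only illustrates the order described above (allow-list first, then online tunnel services). The `Router` class, its fields and the return values are hypothetical names, not part of Spokes. Looking up tunnel services by allocated port alone is a simplification that fits non-HTTP services.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Router:
    # Hypothetical: a predicate implementing the allow-list check.
    is_allowed: Callable[[str], bool]
    # Hypothetical: TCP port allocated on the server -> is that tunnel online?
    tunnel_ports: dict[int, bool] = field(default_factory=dict)

    def route(self, host: str, port: int) -> str:
        """Decide where a CONNECT request for host:port should go."""
        if self.is_allowed(host):
            return "external"  # allow-listed destination, checked first
        if self.tunnel_ports.get(port, False):
            return "tunnel"  # service behind a tunnel that is online
        return "reject"  # neither allowed nor an online tunnel service
```

A request for an allow-listed host is forwarded externally even if its port number also happens to be allocated to a tunnel, because the allow-list is consulted first.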

This feature will enable Spokes to be integrated with more applications that are deployed alongside it and connect applications without requiring them to be exposed to the public Internet.

Application Mesh Network

We’ve added the ability to access services hosted behind tunnels, from other tunnels. With Spokes, the data channel portion of a tunnel is established in a reverse fashion. Tunnels (clients) authenticate and then set up several data connections by requesting WebSocket upgrades and then pooling them for use to transfer data from Internet clients to services hosted behind the tunnel. The request routing performed on the client-side of the tunnel is done by implementing a Resolver.

Resolvers on the server (Spokes) side have always been nil. This meant any connections from the client side would be dropped. We implemented a new resolver that Spokes uses to route connection requests from client tunnels to other services. This enables connecting an application on host A to a service running behind a tunnel on host B.

Let’s say that host B is running a redis server and it has allocated a TCP port 22123 on the Spokes server and is forwarding that to the redis server. A user on host A can access this server by running the following command:

[user@host ] pktriot --config /path/config.json ports map 6379 \
    --transport tcp --destination redis --port 22123

[user@host ] pktriot --config /path/config.json start

Now any program on host A can access 127.0.0.1:6379 and connect to the redis server running on host B. This makes connecting remote applications and clients secure and simple, since the transport uses TLS connections from the tunnel clients to the Spokes server. No services such as redis, databases or other servers have to be exposed to the public Internet to provide distributed access to them.
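As an illustration, a program on host A could check the mapped port with nothing but the Python standard library. This is a minimal sketch that assumes the mapping to 127.0.0.1:6379 is active and that the redis server does not require authentication. The helper names `encode_command` and `ping` are made up for this example.

```python
import socket


def encode_command(*parts: str) -> bytes:
    """Encode a command as a RESP array of bulk strings (the redis wire format)."""
    out = [f"*{len(parts)}\r\n".encode()]
    for part in parts:
        data = part.encode()
        out.append(b"$%d\r\n%s\r\n" % (len(data), data))
    return b"".join(out)


def ping(host: str = "127.0.0.1", port: int = 6379, timeout: float = 5.0) -> bytes:
    """Send PING through the locally mapped port and return the raw reply line."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(encode_command("PING"))
        return conn.makefile("rb").readline()


if __name__ == "__main__":
    # The application only ever sees a local address; the tunnel does the rest.
    print(ping())
```

In practice you would use a regular redis client library and point it at 127.0.0.1:6379; the raw socket is used here only to keep the sketch free of dependencies.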

The SOCKS server will need to be enabled in the Spokes server configuration; however, you do not need to open any plain-text or TLS ports for the SOCKS server. It can exist as a virtual internal server.

Port mapping is described in more detail in the client update below.

API Endpoints

We’ve added new endpoints for performing search across tunnels using terms, operating system, version and CPU architecture. This allows callers to look up a specific tunnel directly instead of iterating through the full list to find it. This new endpoint is available at /api/admin/v1.0/tunnel/search. More information is available in our Spokes API docs.

All endpoints that return tunnel objects, such as /api/admin/v1.0/tunnel/list/active, /api/admin/v1.0/tunnel/list/online, and the others, will now return paginated results when the number of tunnels is over 50. Page results are stored in a new property called link in the response object.

These link objects are described in more detail in our docs as well. Each page is assigned a unique ID and is cached for 720 seconds. This enables the caller to process tunnel objects in chunks instead of reading all the results.
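Processing results in chunks could look like the following Python sketch. The exact shape of the link object is documented in the Spokes API docs and is not reproduced here, so the `"tunnels"`, `"link"` and `"next"` keys are hypothetical placeholders. `fetch` stands in for whatever HTTP call your client makes.

```python
from typing import Callable, Iterator, Optional


def iter_tunnels(fetch: Callable[[Optional[str]], dict]) -> Iterator[dict]:
    """Yield tunnel objects one page at a time.

    fetch(page_id) is supplied by the caller and performs the request;
    passing None asks for the first page. All key names used below are
    placeholders, not the documented response schema.
    """
    page_id = None
    while True:
        response = fetch(page_id)
        yield from response.get("tunnels", [])
        # Follow the reference to the next page, if the response has one.
        page_id = (response.get("link") or {}).get("next")
        if not page_id:
            break
```

Because pages are cached for 720 seconds, a caller written this way should request each following page within that window.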

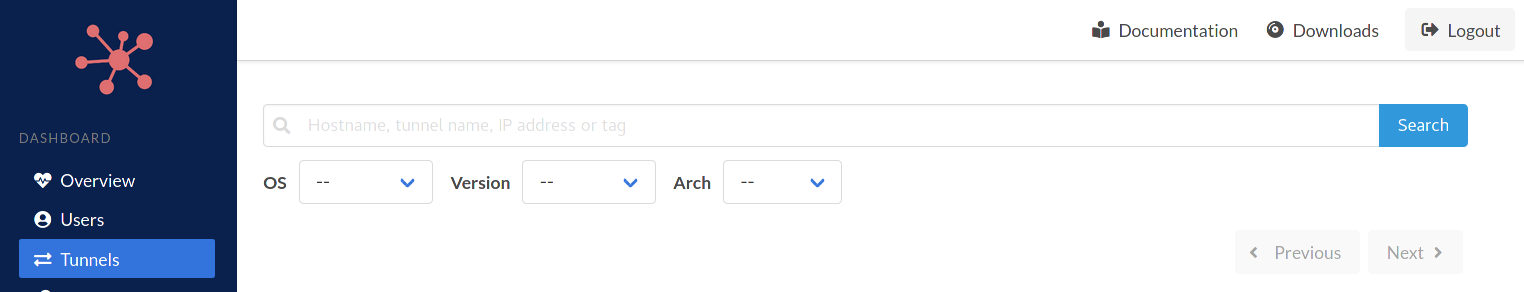

Tunnel Search

We implemented a tunnel search form in the Spokes dashboard. It is built on the same endpoint that backs the tunnel search API.

Tunnel Search and Filters

In the future we intend to add “actions” to the results. For example, an admin may want to search for a particular version of online clients and perform a software update. Other actions can be reverting to versions installed on the hosts or shutting the tunnels down.

Packetriot Client Updates

Revision v0.11.1 of our client program adds support for mapping local ports to remote tunnel services. This enables simple connections between a tunnel running on one host and a service that is running behind another tunnel.

As a convenience, we added a generic SOCKS server to the client. It listens for requests on a local port and forwards them to Spokes, providing a single port for requesting a variety of services hosted behind tunnels as well as external services.

Port Mapping

A new command has been added to the pktriot client program: pktriot ports. This command is used to map local ports to a remote service that is identified using the transport (tcp), destination (hostname or IP) and destination port. It’s important to note that this command only works for customers that have deployed their own Spokes server. This command does not function for customers using our SaaS.

For example, a mariadb db server may be running on a remote host that is privately exposed using a tcp traffic rule that forwards port 22123 to the local socket listening on 127.0.0.1:3306. An application we’ve deployed somewhere else may need to access it. We can do that by mapping tcp:mariadb:22123 to a local listening port. This is the command:

[user@host ] pktriot ports map 3306 --transport tcp --destination mariadb --port 22123

For services hosted behind tunnels that are not HTTP-based (HTTP-based services being those that listen on port 80 or 443), the destination hostname is not actually used to locate the resource. However, you can also map a port to an external service, such as AWS Aurora. Aurora can host MySQL or Postgres databases in the cloud. You can map a local port to this service in two steps:

- Add the DB endpoint to the SOCKS allow-list in Spokes

- Use the pktriot ports map command to map a local port to the DB endpoint

Here is an example:

[user@host ] pktriot ports map 3306 --transport tcp \
    --destination mycluster.cluster-123456789012.us-east-1.rds.amazonaws.com --port 3306

The new ports command and Spokes v1.3.1 enable you to map local listening ports to services hosted behind tunnels or deployed in the cloud, and make them all appear as if they are local resources. This will simplify the configuration for services that are deployed in remote locations or container environments, and for the local development environments of your development team.
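One practical consequence is that application configuration can refer to loopback addresses only. The Python sketch below assumes the two port mappings used as examples in this post (3306 and 5000) exist on the host. The `LOCAL_SERVICES` table and the `is_listening` helper are illustrative names, not part of the client.

```python
import socket

# Every dependency is addressed through a locally mapped port.
LOCAL_SERVICES = {
    "mariadb": ("127.0.0.1", 3306),
    "docker": ("127.0.0.1", 5000),
}


def is_listening(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for name, (host, port) in LOCAL_SERVICES.items():
        state = "up" if is_listening(host, port) else "down"
        print(f"{name}: {host}:{port} is {state}")
```

A check like this only confirms that the local listener is accepting connections; it does not prove the remote service behind the tunnel is healthy.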

Below are examples of listing defined port mappings and removing them.

[user@host ] pktriot ports ls

+--------------------------------------------------------------+
| Local Port | Transport | Host             | Destination Port |
+--------------------------------------------------------------+
| 5000       | tcp       | docker.srv.local | 5000             |
+--------------------------------------------------------------+
| 3306       | tcp       | mariadb          | 22123            |
+--------------------------------------------------------------+

[user@host ] pktriot ports rm 5000

Removed port mapping

We’re planning on publishing tutorials and examples that can be used to help illustrate all the possibilities of using local port mapping to simplify your deployed applications.

Please feel free to reach out to us if you have any questions about this feature.

SOCKS

A SOCKSv5 listening port has also been added to the client. This feature is similar to port maps and is available to customers that are hosting a Spokes server.

The SOCKS listener built into the client will forward all requests to the Spokes server and they will be processed there. This means that destinations are limited to services hosted behind tunnels and external destinations that are allowed. This feature is useful if you want to avoid mapping lots of local ports and prefer using SOCKS as an interface for lots of services that may be distributed elsewhere.

Here is an example of setting up the local SOCKS listener:

[user@host ] pktriot proxies socks listen 1080

You can now point SOCKSv5 clients to 127.0.0.1:1080 and make requests to services running remotely behind other tunnels, or to external destinations and applications that you’ve allowed in the Spokes server configuration.

You can remove the SOCKS listener with this command:

[user@host ] pktriot proxies socks rm

Summary

This is an exciting release for Spokes. We’re beginning to provide features in Spokes and our client to support mesh networking scenarios for our customers.

Mesh networking can be complicated and intimidating to configure. A mesh network is normally implemented with several services, and it takes knowledge and experience to understand both those services and the infrastructure they are deployed on. We want to simplify mesh networking and will continue to expand our support for those use cases.

Our roadmap includes more fine-grained access controls for connecting to applications across the mesh network. Please follow us on Twitter for updates and support. Support requests by email are welcome as well. Thanks to our customers for sending us their input and feedback. You continually help us improve our products and your experience with them.

Thanks!